TL;DR

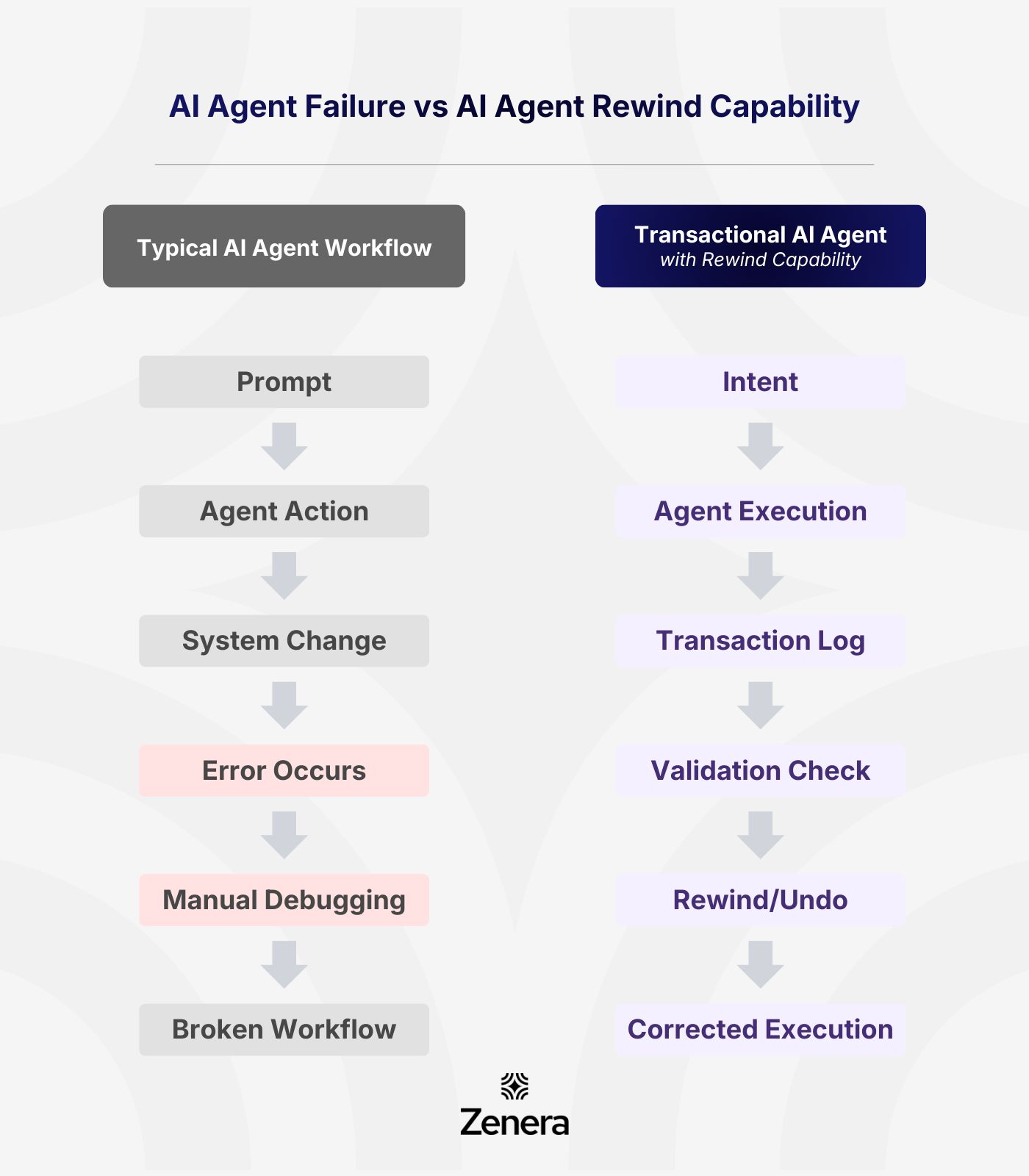

What happens when an agent makes the wrong decision

Most agent frameworks have no safe rollback mechanism

Enterprise systems require transactional integrity and rewind capability

Zenera’s transaction-aware agents with controlled undo and correction

The Problem Nobody Talks About with AI Agents

Agentic AI is growing quickly. Everyone is talking about what agents can do.

Agents can now:

write code

orchestrate workflows

call APIs

update systems

execute multi-step tasks

That’s powerful.

But there’s a question most of us are avoiding:

What happens when the agent gets something wrong?

In enterprise environments, agents don’t just generate text.

They affect real transactions. They can modify configurations, update records, and trigger operational workflows.

If an agent executes the wrong step, the consequences can cascade across systems.

The reality is that most agent frameworks today don’t solve this problem.

They assume the agent is right.

Or they rely on crude mechanisms like version rollbacks.

That might work for generated code or a website.

It doesn’t work for enterprise systems.

Why Enterprise Systems Need Rewind

Enterprise software has always relied on transactional integrity.

Think about a simple banking example.

If money is debited from one account, it must also be credited to another. Only when both actions succeed is the transaction complete. If something fails halfway, the system reverses the entire transaction.
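To make the banking example concrete, here is a minimal sketch in Python using the standard-library `sqlite3` module. The `accounts` table, the account names, and the helper functions are assumptions made up for illustration; they are not taken from any real banking system or from Zenera.

```python
import sqlite3


def make_demo_db():
    """Create an in-memory database with two hypothetical accounts."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE accounts (id TEXT PRIMARY KEY, balance INTEGER)")
    conn.executemany(
        "INSERT INTO accounts VALUES (?, ?)", [("alice", 100), ("bob", 50)]
    )
    conn.commit()
    return conn


def balances(conn):
    """Return {account_id: balance} for every account."""
    return dict(conn.execute("SELECT id, balance FROM accounts"))


def transfer(conn, src, dst, amount):
    """Debit src and credit dst as one unit; return True only if both succeed."""
    try:
        # Used as a context manager, the connection commits if the block
        # finishes and rolls back if an exception escapes it.
        with conn:
            debit = conn.execute(
                "UPDATE accounts SET balance = balance - ? WHERE id = ?",
                (amount, src),
            )
            if debit.rowcount != 1:
                raise LookupError(f"unknown source account: {src}")
            credit = conn.execute(
                "UPDATE accounts SET balance = balance + ? WHERE id = ?",
                (amount, dst),
            )
            if credit.rowcount != 1:
                raise LookupError(f"unknown destination account: {dst}")
        return True
    except LookupError:
        return False
```

With this sketch, `transfer(conn, "alice", "nobody", 30)` performs the debit, fails on the credit, and the rollback leaves both balances exactly as they were. The debit never becomes visible on its own.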

AI agents operating inside enterprise systems need the same discipline.

Without it:

Agents can partially execute workflows

Systems can enter inconsistent states

Debugging becomes extremely difficult

This is where most AI frameworks fall short.

They generate actions but don’t manage what happens when an action fails or turns out to be wrong.

Introducing the ‘Rewind’ Capability

Transaction-aware agents are at the core of Zenera.

Every action an agent performs is recorded as part of a structured transaction.

That means:

Actions can be validated before completion

Logic paths can be inspected

Incorrect outcomes can be reversed

In simple terms:

The system can rewind.

If an operator detects an issue, they can instruct the agent to roll back the operation.

The platform unwinds the logic step by step.

In the next set of articles, we will talk about Reliability with … guaranteed execution for durable workflows.
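One way to picture the rewind idea is a journal of completed steps, each paired with a function that undoes it. The sketch below is an illustration only, written under that assumption; `AgentTransaction`, `set_key`, and every other name in it are hypothetical and do not describe Zenera’s actual implementation or API.

```python
class AgentTransaction:
    """Records each completed step together with the function that undoes it."""

    def __init__(self):
        self.journal = []  # (name, undo) pairs in execution order

    def run(self, name, action, validate=None):
        # An action performs its change and returns a callable that reverses it.
        undo = action()
        # Validate before the step counts as complete; undo it right away if not.
        if validate is not None and not validate():
            undo()
            raise ValueError(f"validation failed for step: {name}")
        self.journal.append((name, undo))

    def rewind(self):
        """Undo every recorded step, most recent first, and return their names."""
        undone = []
        while self.journal:
            name, undo = self.journal.pop()
            undo()
            undone.append(name)
        return undone


def set_key(store, key, value):
    """Build an action that sets store[key] and remembers how to restore it."""

    def action():
        had_key = key in store
        old_value = store.get(key)
        store[key] = value

        def undo():
            if had_key:
                store[key] = old_value
            else:
                del store[key]

        return undo

    return action


# Usage: an agent changes a (hypothetical) configuration in two steps,
# then an operator asks for the whole operation to be rolled back.
config = {"timeout": 30}
tx = AgentTransaction()
tx.run("raise timeout", set_key(config, "timeout", 60))
tx.run("enable retries", set_key(config, "retries", 3))
undone_steps = tx.rewind()  # steps are undone in reverse order
```

Undoing in reverse order matters because later steps may depend on earlier ones. In a real system the undo functions would be compensating calls against external services rather than dictionary updates, and some actions may not be reversible at all.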

The Internal Audit

Before you move on, pause for a moment.

Screenshot the question below and share it in your team Slack. The discussion it triggers will be far more revealing than any AI roadmap.

What’s our rollback strategy if an AI agent executes the wrong workflow in production?

This shift is exactly why we built Zenera the way we did.

If you’re a founder, CTO, or VP Product who has tried to deploy AI into real software and felt the friction I just described, reply to this email and let’s talk.

To get a feel for the Zenera platform, where software is dynamically built and maintained, visit https://zenera.ai/introvideo

Signing off,

Ramu Sunkara

Co-founder and CEO at Zenera AI