Every few weeks, the AI industry finds a new shiny object.

A new model launches.

A new agent framework appears.

A benchmark chart shows a dramatic jump in reasoning.

Within days, LinkedIn is full of hot takes. Within weeks, enterprises are re-evaluating their roadmaps. The narrative is always the same: ‘This changes everything.’

In reality, very little changes where it matters most.

Most AI breakthroughs are demonstrated in controlled conditions with clean prompts, structured inputs, clearly defined tasks, and stable data.

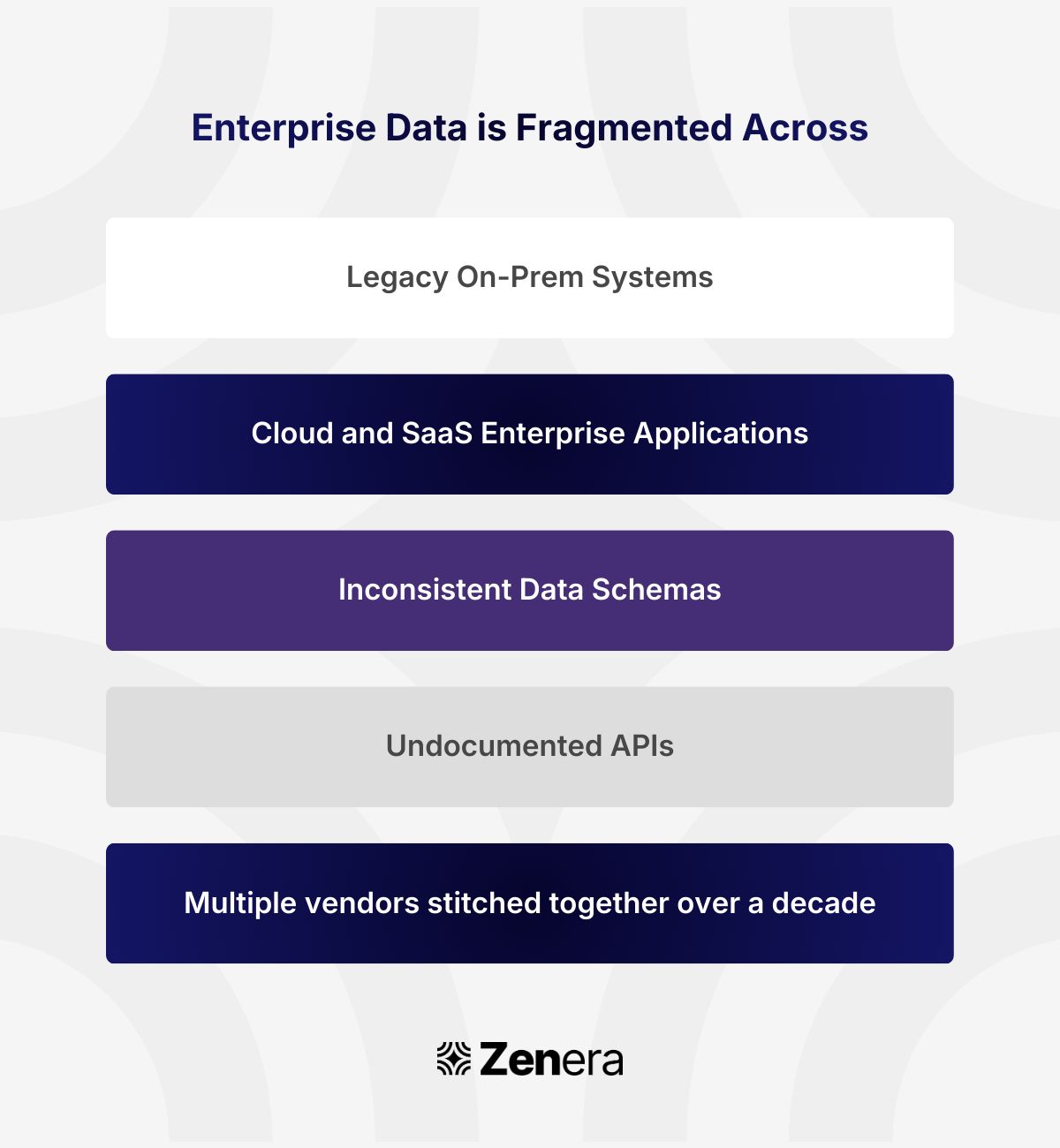

But enterprise systems do not look like that. They are fragmented across many applications and data sources.

And layered on top of that is something even more complex: human judgment, informal processes, escalation paths, compliance requirements, and knowledge that never made it into documentation.

Because of this messy environment, AI initiatives encounter friction.

The Story No One Publishes

A few weeks ago, I met with the CTO of a large enterprise that had ambitious plans to embed AI across hundreds of workflows.

On paper, the strategy was perfect. Strong models, good teams and budget.

But they failed badly.

Why?

Because each deployment felt like starting from zero. The issue was not intelligence. It was heterogeneity.

When systems are built assuming clean, uniform data, they collapse under real-world variance.

One of the most underestimated barriers to enterprise AI is heterogeneity.

Waiting for ‘perfect data’ is a fantasy.

The reality is that enterprises must operate with imperfect, evolving, and sometimes contradictory information. This gap is what makes or breaks ROI.

Chasing new shiny objects such as model upgrades, without addressing environmental complexity, only increases integration fatigue.

The organization feels busy, but operational value doesn’t move at all.

To me, an AI initiative can only succeed if it has these components:

Intelligence lives inside workflows, not outside them.

Systems can reason across heterogeneous applications / data sources.

Constraints are explicit and enforceable.

Domain experts can directly interact with the system to correct, override, and refine decisions.

Every AI decision is explainable and traceable.

The system can evolve, adding new logic or fine-tuning workflows without starting from scratch.

Architecture is model-agnostic, so new models become upgrades, not rebuilds.

When these foundations exist, new models become upgrades, not disruptions. Without them, every new release resets the system.
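To make a few of these components concrete, here is a minimal sketch in Python of what model-agnostic, constraint-checked, traceable decisions could look like. Everything in it is hypothetical and for illustration only: the `Model` interface, the `Constraint` and `Decision` types, the `decide` function, the two stand-in models, and the refund limit are all assumptions, not a description of any real product or library.

```python
from dataclasses import dataclass, field
from typing import Callable, Protocol


class Model(Protocol):
    """Hypothetical interface: any model backend only has to provide this method."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class Constraint:
    """An explicit business rule (hypothetical type)."""

    name: str
    check: Callable[[str], bool]  # returns True when the output is acceptable


@dataclass
class Decision:
    output: str
    approved: bool
    trace: list[str] = field(default_factory=list)  # record of what was checked


def decide(model: Model, prompt: str, constraints: list[Constraint]) -> Decision:
    """Run the model, then check every constraint and record the result."""
    output = model.complete(prompt)
    trace = [f"model={type(model).__name__}"]
    approved = True
    for constraint in constraints:
        ok = constraint.check(output)
        trace.append(f"{constraint.name}={'pass' if ok else 'fail'}")
        approved = approved and ok
    return Decision(output, approved, trace)


# Two stand-in models with canned answers (assumptions, not real backends).
class OldModel:
    def complete(self, prompt: str) -> str:
        return "refund 500"


class NewModel:
    def complete(self, prompt: str) -> str:
        return "refund 50"


# Assumed rule: refunds of 100 or more are not approved automatically.
limit = Constraint("refund_under_100", lambda out: int(out.split()[-1]) < 100)

for model in (OldModel(), NewModel()):
    decision = decide(model, "Handle ticket", [limit])
    print(decision.approved, decision.trace)
```

In this sketch, swapping `OldModel` for `NewModel` changes nothing in the workflow code. The constraint and the trace live outside the model, which is the sense in which a new model becomes an upgrade rather than a rebuild.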

The Hard Truth

The biggest risk to enterprise AI right now is building fragile systems that cannot survive heterogeneity. If your AI strategy assumes clean data, uniform systems, and stable workflows, it will fail.

The teams that will win this decade are not the ones chasing the next shiny object.

They are the ones designing for messy reality, and building intelligence that works inside it.

If you’re a founder, CTO, or VP Product who has tried to deploy AI in the enterprise and you agree with this thesis, just reply to this email. Let’s talk.

Signing off,

Ramu Sunkara

Co-founder and CEO at Zenera AI